Today’s wrong question is: “Do you hate A.I.?”

This is the wrong question because it turns out the answer is most likely YES.

The data’s pretty clear right now, and it’s… intense.

A March 2026 NBC News poll found only 26% of registered voters view A.I. positively, which means it’s less popular than ICE. Quinnipiac found 55% of Americans think A.I. will do more harm than good, up 11 points in a single year. And Gallup found that only 18% of Gen Z, one of the generations using A.I. the most, feels hopeful about it. 31% of Gen Z say they’re angry about A.I.

So, statistically, you really might hate A.I.

Which I think means a better question is: “WHY do you hate it?”

That’s a question we can actually do something with.

This Train Ain’t Stopping

So, we’ve got a lot of A.I. haters out there. You might be one of them. Or maybe you’re just curious what all the hate is about! Either way, let’s get to the bottom of it. Because the anger isn’t just showing up in polls. It’s now showing up in the streets.

A few weeks ago, someone threw a Molotov cocktail at Sam Altman’s house in San Francisco. An Indianapolis city councilman who voted to approve a data center rezoning woke up to more than a dozen gunshots fired at his front door and a note that read: “No data centers.” Cities like mine (Denver) and states across the country are passing moratoriums. Bernie Sanders and AOC have proposed a data center pause at the federal level.

Feels clear to me that data centers are the most visible representation of A.I. we’ve got, so they’re becoming something of a flashpoint for this conflict.

And this anger is also showing up… in my mailbox.

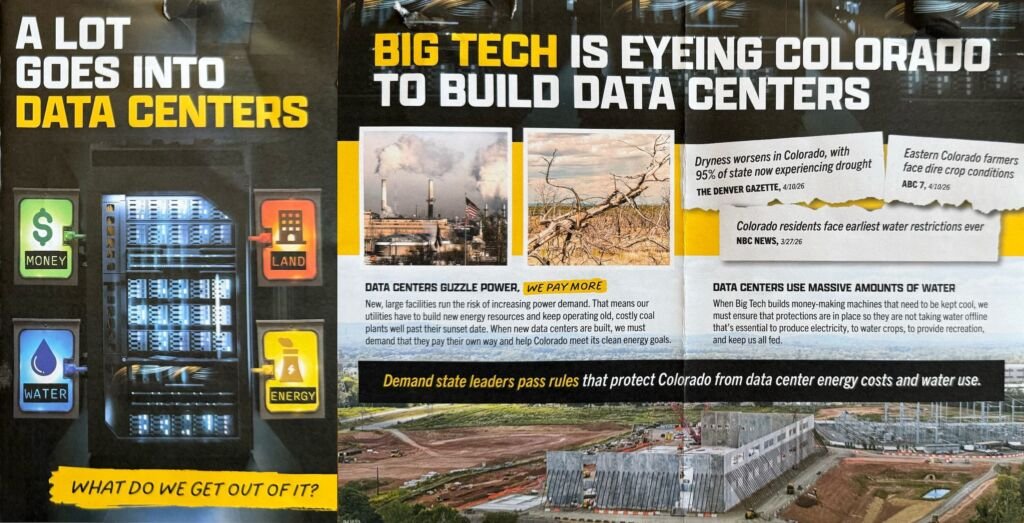

I got this pamphlet sent to my house in Denver last week, paid for by a clean energy advocacy group, addressed to me personally:

The front says: “A lot goes into data centers. What do we get out of it?”

The inside lays it out with two big points:

- Data centers guzzle power, which means utilities keep aging coal plants running past their sunset dates and pass those costs on to us, and

- Data centers also guzzle water for cooling, and in a state like mine, where we’re already facing water restrictions, this is a legitimate concern.

Let’s quickly address these two issues.

I hear a lot about this water thing. But I think most people don’t know: the technology to fix the water problem already exists. Microsoft is building closed-loop systems that recycle the same water continuously instead of constantly drawing from local supplies. And South Carolina and Kansas are both working on legislation to require this kind of closed-loop system for new data centers. This is a governance issue: businesses won’t just “opt in” to these extra costs, so we need to make it mandatory. We could, and we should.

The coal problem has a similar fix: we just don’t let data centers plug into our grid and leave customers holding the fossil fuel bill. We make them build new clean energy to cover the new capacity they need. This is a perfect way to accelerate the clean energy we need for a Star Trek path! And it turns out Colorado already has a proposed bill for this; it just needs to pass.

So this fear-mongering rhetoric… it’s out there a lot. Some of it has a real basis in truth, but we can’t let ourselves get sucked into the fear vortex. We gotta keep doing our research.

That said, I do understand the fear and the anger. I’ve talked at length on this show about a bunch of really big challenges with A.I.

I’ve discussed how A.I. might break the global economic Loop That Holds Up The World, how A.I. is likely to be part of the coming Fourth Turning Crisis, how A.I. succeeding is the only real option we have to save us from our global debt problem, and how A.I. is likely to be the thing that eventually destroys the health care social contract in the U.S.

That’s a lot of really complex problems. I absolutely, 100% understand why A.I. is making us nervous.

But here’s the other thing I am reasonably certain about: no matter how you or I feel about it, this train ain’t stopping.

This has been my position on this show since the beginning, and it’s why I talk about A.I. as much as I do. If we’re on this train, we need to understand how it works and where it’s taking us.

And if you’re upset, that’s reasonable, but Molotov cocktails aren’t going to fix anything. And ultimately, neither will data center delays — not in the macro sense. If you vote a data center out of your town, tech companies will just build it in the next county. Or state. Or Canada. Or frickin’ space, apparently. The capital on this is too committed and the momentum is too established.

Getting angry at A.I. isn’t going to be a winning strategy. Our anger needs a better target. And frankly, a better plan. So let’s find one.

We’re All Luddites Now

You’ve probably heard the word “Luddite”: basically, a scared, backwards-looking, anti-technology peasant who can’t handle progress.

Well, that’s what it means now. That’s how I’ve always used it. But it wasn’t always this way.

Here’s who the Luddites actually were.

It’s 1811. England. The Industrial Revolution is underway. A group of textile workers in Nottingham begins a campaign of organized machine-breaking, targeting the automated looms and knitting frames displacing their labor. They call themselves “Luddites,” followers of the mythical “General Ludd,” a kind of Robin Hood figure for the industrial age.

To this day, nobody really knows if General Ludd was a real person, but it didn’t matter. He was a symbol of the idea that workers deserve a say in how transformative technology reshapes their lives.

These were not ignorant peasants. They were skilled, trained, middle-class artisans. Weavers who had spent years mastering their craft.

And what many people don’t know is: before they broke a single machine, they tried every polite option available to them.

In 1807, 130,000 workers signed a petition and sent it to Parliament demanding a minimum wage. 130,000 signatures! Parliament rejected it, mostly because the MPs voting it down were the same factory owners who would have had to pay the wage. They asked for pensions for displaced workers. They asked for minimum labor standards. They requested that if the machines were going to take their jobs, the gains should at least be shared.

They were ignored.

So in March 1811, they changed their methods… and picked up sledgehammers.

They raided factories at night, targeting only the owners who were cutting wages and ignoring their demands. In the early period alone, Luddite bands conducted at least a hundred separate attacks, destroying roughly a thousand machines. They were organized, strategic, surgical.

The government’s response was savage. Machine-breaking was made a capital offense. At least 12,000 militiamen flooded the textile counties, and in a painful twist of irony, many of these troops were probably unemployed weavers. In 1813, 17 Luddites were executed. Others were transported to Australia. The movement was crushed, not by argument, but by state force.

And over time, “Luddite” got redefined from “worker demanding fair terms in the face of displacement” to “idiot afraid of progress.” Nobody even had to organize the smear campaign. The word just got quietly repurposed during the computer revolution, and by the time it landed in our dictionary, the labor movement it came from had basically been written out of the definition.

Since learning this, I’ve come to a rather strange realization… I think I’m a Luddite.

Not in the redefined way, of course… in the original sense.

I believe A.I. is transformative, it’s happening, and in many ways it’s extraordinary. I just want to know: who gets the gains? I want the terms renegotiated. I want workers to have a seat at the table when decisions get made.

That’s the Original Luddite position: workers deserve a say in how transformative technology reshapes our lives.

And looking at the polling, looking at the Molotov cocktails, looking at Gen Z’s anger… I wonder if this isn’t the real source of our fear.

Here’s my theory: I think most of us are “Original Luddites” right now.

We aren’t really anti-technology — I mean, just look how much we love our freaking phones — but we are anti- a whole bunch of things that surround A.I. right now.

We are anti-concentrated gains, but we can see the inequality gap widening with every OpenAI fundraise.

We are anti-job displacement, but our leaders don’t have a plan.

We are anti-crappy labor standards, but our gig economy already treats workers as disposable, and A.I. is about to turbocharge that.

We are anti-surveillance, but A.I. tools want access to everything we do.

We are anti-propaganda, but A.I. is making it nearly impossible to know what’s real.

We are anti-powerlessness, but nobody asked us if we wanted any of this.

We are anti-oligarchy, but the people building this technology aren’t elected and aren’t really accountable.

We are anti-being-replaced, but tech CEOs are literally on record saying that’s the plan.

And there’s one more big fear I’m not going to get into today: we’re clearly getting increasingly concerned that A.I. is eroding our ability to think, learn, and be creative. That one deserves its own episode; we’ll talk about it next week.

But generally speaking, I think all these fears are setting off a generational, “body keeps the score”-kind of response somewhere deep in our DNA, and it’s making our collective “spidey sense” go off like crazy around A.I. and its most visible proxy: data centers.

I think most of us are Luddites. We just didn’t know that was the word for it… I suppose because it’s not, anymore.

Here’s what history shows, across every major economic transition: the gains go to the top first. The workers being displaced resist and get called irrational. Eventually, after enormous suffering and protest and pain, government intervenes and forces redistribution. And then everyone acts like that outcome was inevitable all along.

The Industrial Revolution. Child labor. Women’s suffrage. Worker safety conditions. Same pattern, every damn time.

But is it possible that this time, enough of us know better?

I would like to think this is a possible positive outcome of us being constantly drowned in information. Maybe, this time around, in this Chaos Window, because enough of us see the historical patterns, we can find a way to shorten the brutal stretch that always accompanies this kind of societal transition.

But as far as I can tell, the only thing powerful enough to do this is government.

Which obviously brings us to another problem.

Seriously Forked

I don’t often skewer other podcasts, but when I do, it’s for a good forkin’ reason.

Hard Fork is a New York Times technology podcast hosted by Kevin Roose and Casey Newton. These are two of the sharpest tech journalists working today. Smart, well-sourced, intellectually honest, fun to listen to. In their recent episode about the A.I. backlash, they got closer to the real answer than almost anyone in the mainstream media I’ve heard.

And then they fumbled it. Twice.

Around the 28-minute mark, Kevin correctly identifies that the real solution to the A.I. backlash is described in OpenAI’s recent paper that mentions Shared Wealth Funds and more adaptive safety nets.

But then Casey responds: “That seems to require America to turn into Europe overnight.”

Kevin — who just articulated the right answer, mind you — immediately retreats.

I don’t know why these two smart people blinked in that moment. But I want to call it out, because it really frickin’ matters.

This is a kind of “preemptive surrender” — calling the right answer impossible before anyone even really tries. This is exactly how the status quo protects itself without having to lift a finger.

And it’s actually even stupider, because this answer doesn’t require the U.S. to become Europe at all. It just requires us to remember the great things we’ve already done.

In the late 1920s, nearly 90% of rural U.S. households had no electricity. Private utilities had zero interest in changing that: there wasn’t enough profit in running lines to farms and small towns. The technology existed. The need was obvious. The Market simply had no incentive to care. Then FDR’s team decided this transformative technology was essential for everyone to have. The Rural Electrification Act passed in 1936. Two decades later, rural electrification had gone from 3% to 90%.

Turns out, we are very good at sharing the wealth when the right incentives are in place.

That wasn’t “the U.S. turning into Europe.” It was the U.S. just being a united frickin’ group of states, caring about each other, and deciding that some things matter too much to leave to The Market.

When Casey and Kevin let this convo just move on, I thought I couldn’t be more disappointed in them.

But, I was wrong… because they did it again, immediately.

As I mentioned, a couple minutes earlier in the show Kevin talked about the OpenAI paper that calls for a public A.I. wealth fund, a mechanism where every citizen would get a stake in A.I.’s economic upside.

Basically, an Alaska-style dividend like we’ve been talking about on this show since Episode 1.

Kevin says he’d be happy if one member of Congress was reading that paper. Casey makes a genuinely valid point: OpenAI is simultaneously backing politicians who would never support wealth redistribution, and lobbying against the very A.I. regulations it claims to want. The hypocrisy is real. An amazing point!

But then, they just… drop it, send up some kind of “podcast prayer” for new politicians, end the segment and move on to the next one.

Forgoodnesssake, guys! Don’t let the hypocrisy of the messenger kill the message.

The Alaska Permanent Fund — which has paid every Alaskan resident an annual dividend from oil revenues since 1982 — didn’t happen because oil companies had a change of heart. It happened because wise Alaskan leaders like Republican Governor Jay Hammond had the foresight to enshrine it in the state’s Constitution on the front end. They didn’t need Exxon to become a saint, they just needed good governance in place.

This kind of thing is not a Market-based outcome. It’s a civic design choice.

Remember, too: an A.I. Shared Wealth Fund isn’t re-distribution, like taxes. It’s pre-distribution, which builds shared ownership into the structure before the value concentrates.

The Luddites asked for this more than two centuries ago. FDR built the electricity version of this in 1936. Alaska, and tons of countries around the world, have been running the oil version of this for decades. And OpenAI basically endorsed the A.I. version last month.

I don’t care if any OpenAI executives actually mean it. We don’t need the tech CEOs to mean it. We need enough of us to demand it.

And we need more elected officials with foresight like Jay Hammond.

Don’t Give Up On Government

Speaking of elected officials, let’s talk about government.

I know, I know! Right now “the government” feels like a punchline… at best. The ineptitude is off the charts. Our collective trust in government is at historic lows. I know all of this. I see it every day, just like you do. I doomscroll and curse at the headlines, just like you do.

But there’s a critical difference between “the government is currently screwed up” and “government itself is broken beyond repair.”

The first one is just… Tuesday. But if we drift into “broken beyond repair” territory, we’re giving ourselves an excuse to give up.

My friends, we cannot give up. Let me tell you why.

First, you gotta remember: this is the part of the Fourth Turning cycle where we always lose faith in government. We are right on time! The old systems have to break and die so the new things can be born.

Second, there is exactly one institution on earth powerful enough to rein in the A.I. tech bros, venture capitalists, and billionaires. It’s not your employer. It’s not a nonprofit. It’s not a newspaper. And sadly, it’s not even a great podcast.

It’s a democratically-elected, accountable-to-the-people, can-actually-pass-laws government.

Before you balk at this, let me remind you: the people currently capturing the gains from A.I. aren’t confused about how power works. They’re hiring lobbyists, funding campaigns, flying to Washington, having dinners with senators. They are playing the game with everything they’ve got.

But a lot of us are sitting on the sidelines saying “Ugh, what’s the point. Government is worthless.”

That asymmetry is not an accident. Learned helplessness is the cheapest and most effective political strategy ever frickin’ invented. Those in power don’t need to silence the masses if they can convince them their voice doesn’t matter.

Please, don’t fall for this.

South Carolina and Kansas are working on zero-water-waste data center laws. Colorado has a bill to make data centers build their own clean energy. Alaska baked an oil wealth fund into its constitution and has been writing dividend checks to every resident for over forty years. The democratic machinery, rusty and imperfect as it currently is, still moves when enough of us push it.

Our system isn’t perfect. But it’s still functional enough, and it’s worth reforming because that’s our best shot at creating the future we want. If enough of us show up, things actually can change. We have done it before. We can do it again.

And seriously: we need more Jay Hammonds — elected officials with foresight and thoughtfulness. The only way we get them is to find them, support them, elect them, be them.

The new rules are being written right now by whoever shows up to write them. Please, my friends, we need you to show up.

Leadership Lens

Here’s today’s Leadership Lens.

Your people are probably lying to you about A.I.

Not maliciously. They’re just doing what workers have always done when they sense the boss has already decided… they go along. They show up to the training. They say the right things in the meeting. They put the tool on their desktop. And then they quietly use it as little as possible, offering “performative compliance” and waiting for it to blow over like a fad, while privately feeling exactly what the polls we opened with today describe.

Remember those? 55% of us think A.I. will do more harm than good. 31% of Gen Z, your youngest workers, are flat-out angry about it. Our heaviest users might actually have the most negative feelings. Those feelings don’t disappear when they walk through your office door. They walk in with your people.

This means the most useful thing you can do as a leader isn’t another A.I. training, more tools to try, or a productivity mandate. It’s creating a genuinely psychologically safe place to ask them: “How are you actually feeling about this?” And then truly welcoming the answer.

The leaders who treat their people as participants in this transition rather than passengers along for the ride will build something that’s more powerful than the backlash. As my mentor Terry says: “People only support what they help create.”

So, bring them in. Let them co-create. Everything will work better if you do.

The Optimistic Rebellion

For all my friends, here are three Optimistically Rebellious things you can do right now.

First — let’s reclaim the “Luddite” word. The next time someone uses it to mean “person afraid of tech,” tell them what it actually means: it’s not about the tech itself, it’s about who gets the gains from the tech. I think this is an easy way to invite people into a deeper conversation.

Second — find your Jay Hammond. Somebody in your community is running for something right now and they actually get this. They’re probably not famous. They might be running for state legislature or city council or school board. Go find them and support them. If you need a place to start looking, I recommend checking out the Working Families Party at workingfamilies.org/candidates/

Third — talk about WHY we’re actually afraid of A.I. You know it’s going to come up! At dinner. At work. With your skeptical uncle. The A.I. backlash is real and growing, but most people don’t have clarity about their own fear or a plan for what to do about it. You now have ideas for both.

Remember, the Original Luddites had 130,000 signatures but no real lever to pull. You have something they didn’t: a system that still responds, however imperfectly, to enough people pushing in the same direction.

Let’s show up however we can while we still have time to negotiate what’s next.