🚨 If you’d rather WATCH or LISTEN TO this article, you can!

📺 YouTube // 🎧 Spotify // 🍏 Apple

Today’s wrong question is this: “Can we prevent the A.I. crisis?”

I’m seeing this question everywhere. In the comments on my videos. In headlines and in think pieces. In worried conversations at conferences.

People have very different ideas about what “the crisis” even means. Some think it’s A.I. replacing workers entirely. Others think it’s wealth concentrating until we’re living in techno-feudalism. Some think it’s… well, even darker than that. (We’ll get there.)

But they’re all asking the same underlying question: Can we stop this?

Here’s what I need to tell you: probably not. History suggests we don’t usually “prevent” crises (at least not the kind I’m talking about), and more importantly, we actually don’t want to.

But — and this is crucial — we can shorten their pain.

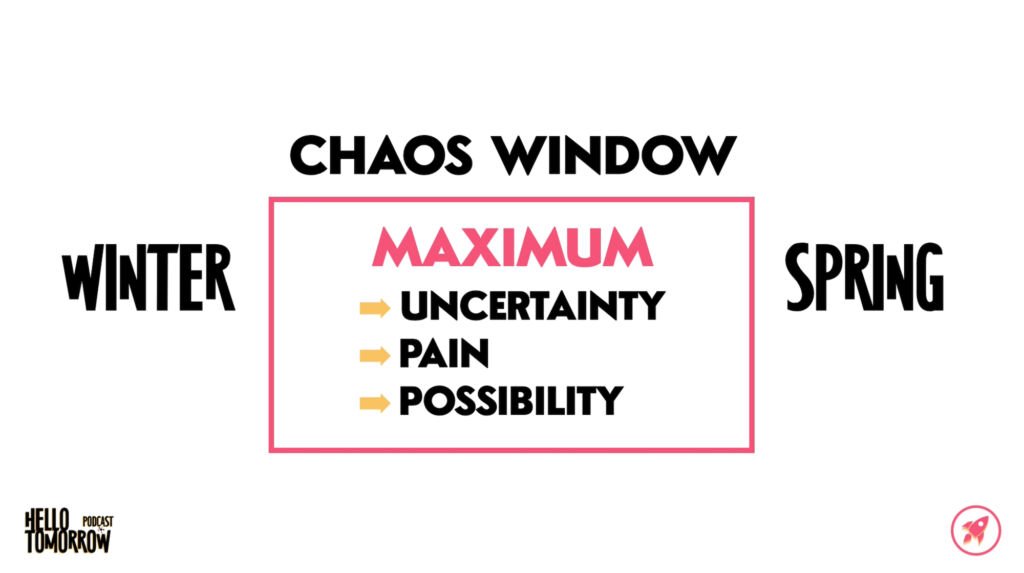

Today I’m going to show you why “prevention” is the wrong goal, introduce you to something I call the Chaos Window, and explain how the work we’re doing right now could save a generation of humans from decades of unnecessary suffering.

A Veritable Smorgasbord Of Crises (Yum)

A few weeks ago, I released an episode called “Who Buys Your Stuff, Robots?”

It asked: If A.I. replaces workers and workers lose income, who buys all the stuff the economy produces?

On my YouTube channel, that episode is still growing: it currently has 200,000 views and over 5,000 comments, and I do my best to read every one.

In those comments, I think I’ve seen every version of the A.I. crisis people are worried about. Let’s explore the 3 most popular theories!

“Robot” Solution #1: B2B

The first category of “solution” to my question of “Who buys your stuff, robots?” is basically B2B: business-to-business sales, as opposed to B2C, business-to-consumer sales.

“Josh, B2B can replace B2C. Businesses will sell to other businesses. Problem solved. Boom. Done!”

This makes sense on the surface, because B2B is big. Really big. About twice the size of consumer spending in the overall economy. So I get why people think it could just replace B2C.

But here’s the thing: B2B is bigger because it feeds B2C.

Steel companies sell to car manufacturers. Car manufacturers sell to you. If you can’t buy cars, manufacturers don’t need steel.

So the challenge with this argument is that B2B isn’t independent; it’s derivative. Follow any B2B chain far enough and you hit consumers. That’s where value gets consumed. Remember The Loop! At some point, someone has to buy the thing. To consume it. To want it.

If that final consumer doesn’t have income, the whole chain contracts backward.

B2B can’t replace B2C because it depends on it. A B2B “solution” is really just pushing the same problem upstream… and eventually it ends up in the same place.

So, no, we can’t solve the coming A.I. Crisis with B2B. It was a great hypothesis, though!

“Robot” Solution #2: A.I. Economy

The second category of solution is wilder…

“Josh, A.I. agents will buy, sell, pay taxes, invest. They’ll do everything we do. The economy will thrive without human participants.”

Okay. Let’s dig into this one.

These comments are describing a fully closed “machine economy.” A.I. agents as both producers AND consumers. Value circulates internally. Humans just exit The Loop.

That’s not capitalism as we know it, of course, but what we might call a “synthetic economy.” And honestly? It’s not impossible. We could technically build that.

So the question with this one isn’t: “Can this happen?” The question is: “To what end?”

If A.I. agents are buying from other A.I. agents, investing in assets owned by other A.I. agents, paying taxes to governments that serve A.I. agents, then value has become completely detached from the reason any of us care about money in the first place.

In our world, money only means something because it represents other things: status, access to scarce resources, control over labor, fulfillment of needs. But if the “robot buyers” don’t actually need any of those things, then the money transactions are just… bookkeeping. This wouldn’t really be an economy; it would just be an accounting ledger talking to itself.

The bigger question in this scenario is: why do we produce things at ALL?

Because humans want things. We compete for status, we buy things to try to find meaning, and so on. But if machines are the only participants in this alternate Loop, what drives the desire to produce anything? At best, it’s something like optimization. At worst, it’s just inertia.

There’s a TON of sci-fi out there that explores endpoints of this very idea, and absolutely none of them are uplifting.

We could design systems that exclude humans from economic participation, but… why would we? This doesn’t produce any kind of world that most of us want to actually live in.

So, this isn’t a viable solution to the A.I. crisis, either.

“Robot” Solution #3: Billionaire Bunkers

The third category of “solution” is the darkest:

“Josh, the rich don’t NEED the economy to work for everyone. They have all the resources. Fewer people means fewer problems. And they don’t care about us anyway!”

I’ve seen this one paired with depopulation theories, billionaire bunkers, all of it.

Look, I get why this resonates. Wealth IS concentrating. Billionaires ARE buying islands and yes, making bunkers. And it’s not just Scrooge anymore; some tech elites DO talk about things like “reducing surplus population.” It doesn’t feel that crazy to think: “Maybe this is their plan.”

So let’s take it seriously.

That means asking: “What does a complex global civilization require to function?” After all, it’s that complex adaptive system that allows the ultra-wealthy to live their ultra-lavish lifestyles.

Once we answer that question, the logic of this “solution” breaks down pretty quickly, because the ultra-wealthy depend on systems that require billions of people.

Think about what it takes to maintain a billionaire lifestyle:

- Private jets require global supply chains: fuel, parts, pilots, and air traffic control.

- Luxury goods require designers, craftspeople, and logistics networks.

- Medical care requires doctors, researchers, and pharmaceutical supply chains.

- Technology requires engineers, chip fabs, rare earth mining, and electricity grids.

You can’t run modern civilization with 100 people and robots.

Feudal lords had all the land and weapons. But they couldn’t maintain Roman-level complexity with tiny elite populations. Why? Because complex systems require large populations for specialization, redundancy, and innovation. Without that, the system simplified. Less trade, less coordination, less resilience.

Modern billionaires would face the same constraint. They might survive. But they wouldn’t be able to maintain current levels of complexity. We’ll talk about this more next week.

Complex systems need the conditions that sustain complexity. Remove billions of people, and you don’t get the same civilization. You get a simpler one. So I don’t believe there’s a “depopulation plan.” But I DO think there’s a trajectory where capital optimizes for fewer workers, wealth continues to concentrate, and elites further insulate. Not because anyone’s “planning it,” but because that’s where current incentives lead.

It’s not inevitable; it’s just the trajectory our current incentives favor.

Here’s the good news: trajectories aren’t destinations.

Throughout history, the ultra-wealthy have often forgotten that endless extraction ultimately devalues their own prestige. Their wealth only means something in the container of a functioning society. Money is social technology. It requires collective belief. If society collapses, their billions are just numbers in a database nobody gives a crap about.

They need us more than they think.

And we have more power than they seem to remember.

An Exception That Proves My Point

Those are the varieties of crisis people seem to imagine. None of those possibilities quite holds up under scrutiny, but our collective intuition still seems to be screaming: “There is a Crisis coming, and A.I. will have something to do with it!”

I admit: my spidey sense says the same thing.

At a macro level, humanity has seen this pattern before. Multiple times. Across thousands of years. In every major civilization. Our societies organize and then reorganize, over and over.

The word “collapse” often gets invoked in these situations, but to me that word implies a total ending, and that isn’t really what happens. That’s why I prefer the notion of “reorganization.” We’ve talked about this kind of transition before, in relation to the Fourth Turning.

There’s a Crisis then a Reorganization. A Winter and then a Spring.

So when people ask: “Can we prevent an A.I. crisis?” I can tell you what history suggests: probably not. The reforms we desperately need — in this case: income replacement, automation dividends, public A.I. stakes, new social contracts — don’t tend to happen before the disruption.

They happen after.

After the Triangle Shirtwaist Factory fire. After the suffragists suffered. After the Great Depression. After the organizing conflict forces enough of us to choose.

Now, I want to tell you a quick story about one major exception that happened in my lifetime, but spoiler alert: I think this exception shows us exactly why pre-emption is unlikely to happen now with A.I.

In 1987, the world did something remarkable. Scientists had discovered that CFCs were destroying the ozone layer. Hole in the atmosphere. Massive skin cancer projections.

And the world… did something.

Eventually, 197 countries signed on to the Montreal Protocol. Collectively, we phased out 98% of ozone-killing chlorofluorocarbons. Today, the ozone layer is on track to recover within the next few decades. This was a phenomenal example of global coordination and of pre-empting a crisis.

I actually remember this happening. I remember hearing about CFCs in elementary school and then watching hair sprays and aerosol cans of all kinds changing before my eyes on the store shelves. Single-digit-year-old me had no concept of how extraordinary this actually was.

Double-digit-year-old me now wants to know: WHY were we able to do this then? Why did it work? Here are a few reasons:

- We had a visible threat — a hole we could see from space.

- The science was undeniable — clear mechanism, scary projections, no real controversy.

- Institutions were trusted — the UN worked, governments cooperated, people believed the science.

- Solutions existed and were profitable — alternatives to CFCs were available, industry could adapt and even benefit.

- The problem was contained — limited chemicals, specific industries, not the entire economy.

But the “when” of this problem is what I think made all the difference in its solvability: it happened during the last cycle’s High, between Summer and Fall. Late 1980s. The societal “weather” was still good. Post-WWII institutional strength still held. Global cooperation was still possible.

Now compare that to where we are with A.I. and income displacement.

- The threat is abstract — you can’t see unemployment from space. There’s no hole to point at in a photo.

- The science is contested — about A.I. capabilities, about economic impacts, social consequences, timelines. Everyone has a different prediction.

- Institutions are distrusted — government approval ratings at historic lows, international cooperation fragmented, conspiracy theories everywhere.

- Solutions are unclear and politically radioactive — UBI? Automation taxes? Public ownership of A.I.? Every option touches multiple third rails.

- The problem is foundational — not limited chemicals but the entire labor market, the social contract, how we organize society.

And we’re in Winter — late Unraveling, early Crisis. Not the High. Everything feels “cold.” Institutions are brittle, not strong.

In other words, our inability to change things right now wouldn’t be a moral failure, but a structural one.

We can’t do Montreal Protocol-style pre-emption when nobody trusts the scientists, the government, international bodies, or each other, especially not when the problem is abstract, “political,” and the solutions require completely reimagining how society works.

That’s why pre-emption worked then.

And why it probably won’t work now.

The Chaos Window

So if we can’t pre-empt, what’s the point of me telling you all this?

Because of something I call the Chaos Window.

The Chaos Window is the period between when the old system breaks down and when the new system takes hold. It’s the messy, melty transition from Winter to Spring. It’s when society experiences maximum uncertainty, and often, maximum pain.

But it’s also the season that contains maximum possibility.

And here’s what history shows: some Chaos Windows are short, and some are devastatingly long. But what makes them different isn’t luck — it’s preparation.

We’ll go into this in more depth in next week’s episode, but for now I’ll summarize with a contrasting example from Chinese dynasties.

After the Han Dynasty collapsed in 220 CE, China fragmented for 370 years before the Sui Dynasty reunified it in 589 CE, paving the way for the Tang.

After the Tang collapsed in 907 CE? Only 50 years before the Song pulled it back together.

Same pattern. Same civilization. But one Chaos Window was seven times longer.

What was the difference?

After Tang, they had recent memory of unified governance. They had models. Infrastructure. Shared frameworks.

They were prepared to rebuild.

So they didn’t spend centuries wandering. They spent 50 years.

That’s 320 years of suffering — avoided.

The actions of the leaders in the Song dynasty didn’t prevent a collapse, and in many ways I don’t think that’s the goal. The Crisis is what allows for the change, just like the decay of winter is what allows for the bloom of spring.

We can’t avoid Winter. But we can help determine how long it lasts.

The leaders of the Song dynasty DID shorten the Chaos Window.

And that’s why this work matters.

Not because we’re going to “prevent” the A.I. Crisis.

But because we’re pre-loading the solutions for when things break. Building vocabulary. Circulating frameworks. Planting ideas. So when our Chaos Window opens — when the old system visibly breaks and everyone’s asking “what now?” — some ideas are already there. Will they be perfect? No! But they will be ready to start testing.

Is it noble to prevent suffering when we can? Yes.

Is it MORE noble to shorten the duration even if you know you can’t prevent it? Maybe!

Because every year we shorten the Chaos Window is a year of:

- Fewer families destroyed

- Fewer lives derailed

- Less generational trauma

- Faster recovery for overall society

This isn’t small work. It’s not for the faint of heart. It’s civilization-level work.

And it really frickin’ matters.

We can do this, my friends.

If you’re still here, in this community, saying Hello Tomorrow with me, I’m guessing this gets you a little fired up, too.

The Optimistic Rebellion

This week’s Optimistic Rebellion is to look back in order to look forward.

Think about the New Deal. It didn’t appear from nowhere in 1933. Those ideas — progressive taxation, social insurance, labor protections — had been circulating for decades. When the Great Depression hit, frameworks were ready to deploy. That shortened their Chaos Window.

Same principle here.

“Who buys your stuff, robots?” plants a question. The Fourth Turning framework gives us context. Economic systems thinking provides us with vocabulary. Every week, we understand the problems more clearly. And this allows us all to start brainstorming solutions NOW.

And all of this will help us shorten our Chaos Window when it opens.

So, when someone asks “Can we prevent an A.I. crisis?” we know the answer is probably no, but we also know the exact future isn’t set in stone. There are still SO many choices to be made between here and there, choices that change the direction of the future we will actually get to experience.

Next week, we’ll talk about the two most likely high-level scenarios I can currently envision.

But for today, our Optimistic Rebellion challenge is to start a Hello Tomorrow Brain Trust.

What does that mean?

Find 3-5 people in your network with different expertise and perspectives. Set up a monthly conversation — could be over coffee, could be a video call — just to talk about what comes next. Feel free to have everyone watch or listen to the weekly Hello Tomorrow episode and tear it apart if you like!

You’re not trying to solve everything. You’re building community and pre-loading ideas so when the Chaos Window opens, we’re not starting from zero. FDR didn’t wait until he was in office to build his Brain Trust; he assembled it during his 1932 campaign, so when he took office, they were ready. More recently, Project 2025 did this, too. Of course, I am encouraging us to move into a better future and not into some kind of retconned past, but the same principle is there.

We can do the same thing. Start the conversations now. Build the frameworks your community will need. I’m going to form my own Brain Trust too — let’s do this together!

Even if it’s just a few people meeting once a month, remember: we’re doing civilization-level work. It’s THIS work that will help us shorten our Chaos Window.

Keep the faith, my friends. The future is coming, and together we’re going to make it a great big beautiful one.