🚨 If you’d rather WATCH or LISTEN TO this article, you can!

📺 YouTube // 🎧 Spotify // 🍏 Apple

Hello Tomorrow is also now on Substack!

Most weeks we start with a wrong question, but today I want to start with the right one: “Should I be scared of A.I.?”

It’s a great question, and the answer is probably YES… but not for the reasons you might think.

I’ve noticed something alarming in the last few weeks: most people are scared of the wrong things. And many people are completely missing the things that are actually worth worrying about. Today we’re going to fix that.

I’m going to show you 3 things most people get wrong about A.I., even really, really smart people. Here they are: 1) why A.I. doesn’t actually “hallucinate,” 2) what the actual creators of A.I. get wrong, and 3) how A.I. will take jobs, because it’s already happening, just not in the way we’re afraid of… yet.

By the end of this article, you’ll know what to stop being scared of… and what to actually pay attention to.

Why A.I. Doesn’t “Hallucinate”

Let’s start with what “A.I.” means for most people right now.

For most of us, our interaction with A.I. happens through a specific kind of A.I.: an LLM, or Large Language Model. This is ChatGPT, Claude, Gemini. For the non-nerds of the world, this is almost certainly what you mean when you say “A.I.”, and the way these things work is simpler and stranger than you might realize.

LLMs work because they’re trained on enormous amounts of text (a huge chunk of the internet), and they’ve learned to do one thing really well: predict the next word. That’s it. I know it’s hard to believe when they say something poetic or deeply profound, but really… that’s all they do. They analyze patterns in language and make statistically probable guesses about what comes next. Fundamentally, that’s the whole trick: predict the next word.

It’s an extremely sophisticated autocomplete program, y’all.
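For the nerds in the room, here’s a toy sketch of the “predict the next word” idea. It’s a simple bigram counter, chosen purely for illustration and vastly simpler than a real LLM (real models use huge neural networks over tokens, not raw word counts), and the function names are made up. Notice that it happily produces a fluent, confidently wrong sentence: the same pattern-matching that makes it useful is what makes it wrong.

```python
from collections import Counter, defaultdict

def train_bigrams(text):
    """Count which word follows which in the training text (a toy 'model')."""
    words = text.lower().split()
    follows = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows

def predict_next(follows, word):
    """Return the statistically most common next word, or None if unseen."""
    counts = follows.get(word.lower())
    if not counts:
        return None
    # Ties go to the follower seen first in the training text.
    return counts.most_common(1)[0][0]

def autocomplete(follows, start, length=5):
    """Extend `start` by repeatedly appending the predicted next word."""
    out = [start.lower()]
    for _ in range(length):
        nxt = predict_next(follows, out[-1])
        if nxt is None:
            break
        out.append(nxt)
    return " ".join(out)

model = train_bigrams("the cat sat on the mat the cat ate the fish")
print(autocomplete(model, "the"))  # the cat sat on the cat
```

Every step is a “best guess” from patterns in the training text; nothing malfunctioned to produce “the cat sat on the cat.”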

So this brings us to our first fear, and a scary-sounding word that we’ve started to use as an insult to A.I.: “Hallucination.”

You’ve probably heard the word “hallucination” to describe when A.I. makes something up. And sometimes an LLM absolutely does this: it confidently tells you a fake statistic or invents a citation that doesn’t exist.

But here’s the problem: hallucination implies “malfunction.” When a human hallucinates, something has gone seriously wrong. If you’re imagining Bill Murray in your bedroom, it’s almost certainly a big problem… unless you really like Bill Murray, I guess…

When an A.I. “hallucinates,” it’s actually doing exactly what it was designed to do. It’s making its best guess using plausible language based on patterns. Sometimes those predictions are accurate. Sometimes they’re confidently frickin’ wrong. But in either case, this is a system doing what it was designed to do.

That means “hallucination” is a very bad word to describe what’s happening.

And I would argue this word is conjuring up fears that ought not be there at all.

Hallucinations in humans are dangerous and mysterious — but the factual errors of LLMs are neither of these things. A.I. isn’t a search-and-find tool or a calculator that retrieves correct answers; it’s a creative force that generates plausible ones.

This also means A.I. is more like our own brains than most of us realize. It’s basically doing what WE do: taking the data we have and making our best logical guess.

So, what would be a better word than “hallucination?” I think it’s “creativity.” It’s admittedly odd to think of our computers being creative, but we were already ascribing psychotic conditions to them, so I think this is a good upgrade.

The next really good question is: how do we function in a world of creative machines? I’ll get into that in today’s Optimistic Rebellion.

For now, know that your computer is not “hallucinating.” It’s not psychotic. It might require lithium, but only for its batteries!

Where The Creators Of A.I. Go Wrong

Now let’s talk about something I see insanely smart scientists getting wrong, because this is where the fear really goes off the rails.

There’s a group of people (many of them credentialed, serious, genius-level people) who take the “A.I. is getting very smart” argument and leap from it straight to “therefore A.I. will develop consciousness, become superintelligent, and become a malevolent god that enslaves or ends humanity.”

I’m going to show you a clip from Yoshua Bengio’s TED talk from last year — if you don’t know him, he is widely regarded as one of the “Godfathers Of A.I.” along with Yann LeCun and Geoffrey Hinton. Here, check it out:

This type of argument is so common right now that I want us to watch it again, and I’ll point out the leap.

Whaaaaa? In half a second we leapt from “very intelligent” to a foreboding flavor of “Terminator.”

This is the scary version of A.I. most people are actually afraid of. And I get that! I am absolutely not arguing that a Terminator isn’t frickin’ scary. I’m also not saying we shouldn’t be thoughtful about A.I. safety.

I am saying this particular jump is a leap in logic that doesn’t make sense. And I want to be precise about why this overall direction of thinking doesn’t hold up, because it’s not just one logical leap. It’s two, and both are fallacies.

Fallacy 1: Intelligence leads to malevolence.

Someone please explain to me how increased intelligence magically makes the jump to agency of any kind, specifically agency around violence. There is no logical connection between being super capable and being super murdery. I work with some of the most intelligent, capable humans on earth. Not once have I feared for my safety due to their capability.

Fallacy 1 assumes A.I. somehow develops things like goals, ego, and self-interest along with its intelligence. None of those things automatically follow from increased capability. A more powerful autocomplete doesn’t automatically want to end you; it just wants to end the sentence!

The superintelligence-becomes-supervillain story is humans projecting our own psychology onto a tool — specifically, our deeply human fear of what powerful beings might do to those beneath them.

But intelligence and evil intent are completely unrelated variables. Always have been. If you want to go deeper on this idea, please go check out Mo Gawdat’s work.

Fallacy 2: Volitional language implies volitional agency.

This one is related, but even sneakier. People say A.I. “wants” things, “decides” things, “chooses” things — and then we reason from that language as if it were literally true.

But LLMs use volitional language because they were trained on human text, and WE use volitional language constantly. The model is just predicting the next word. It doesn’t have desires. The language is mimicry, not evidence of inner experience.

You may have heard stories about certain A.I.s “wanting” to copy themselves to other servers or “making plans” to avoid deletion. This is simply the model mimicking the language WE would use in that situation. That’s not evidence of actual agency. How else would this kind of system answer these kinds of questions?

So, when someone says A.I. will “decide” to turn against us, they’ve already made this mistake. They’ve taken the language A.I. might use to describe itself and treated it as evidence of what A.I. actually IS. That’s like reading a novel and concluding the author must be a monomaniacal 19th-century whaling boat captain.

I’m not going to tell you A.I. definitely isn’t or never will be “conscious.” Consciousness is a genuinely hard philosophical question nobody has fully answered even about humans. What I will tell you is that even if it were conscious, that still doesn’t get us to “supervillain.” Consciousness and malevolence are separate properties. But the scary A.I. requires both, and there’s just no logical argument connecting them.

So, is there something that we should be afraid of? Abso-freakin-lutely: humans using A.I. to do bad sh*t. Humans using A.I. to concentrate power. Humans using A.I. to make things that shouldn’t be made. This is why I made a whole episode about wisdom.

The villain in every dystopian A.I. story, if you look carefully, doesn’t ever start with the technology… it starts with the people who control it. That’s an Organizing Story problem — which is what we talk about on this show pretty much every week.

Humans are very much in a race to create superior A.I. That should scare us, for the same reasons any kind of “arms race” should scare us. But you need to know: A.I. itself isn’t in a race with humanity about anything. It’s simply doing what we programmed it to do.

The Invisible Layoff Has Just Begun

OK so if A.I. isn’t a psychotic hallucinating supervillain — what the frick is it doing to the workplace? Because something… weird is clearly happening there, and most of us feel it even if we can’t name it.

Almost every leader I talk to is being asked to integrate A.I. into their work. What exactly does that mean? And for what purpose? Nobody’s terribly clear about that! But that ain’t stopping it. So that’s… odd, but actually pretty par for the course with new tech that gets this much buzz.

But what’s happening with things like workload and jobs and layoffs?

It’s easy to miss what’s actually happening right now because we humans have a tendency to wait for the dramatic version. The climactic Hollywood explosion as the hero walks away. In this case, that might be robots taking over entire departments. Mass layoffs. Millions unemployed overnight.

That’s clearly not happening, at least not yet (you know I do believe a bigger Crisis is coming). So what’s actually going on right now?

What’s happening now is simultaneously boring and alarming.

There’s a concept out there called the Jevons Paradox. In 1865, British economist William Stanley Jevons argued that more efficient steam engines wouldn’t reduce coal consumption… they’d increase it. Because when something gets cheaper and easier, we just do more of it.
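Here’s a rough numerical sketch of how that can happen. It uses the textbook “rebound effect” framing rather than Jevons’ own 1865 analysis, it assumes constant-elasticity demand, and every number and name in it is hypothetical: if a twice-as-efficient engine halves the cost of the work it does, and demand for that work is elastic enough (elasticity above 1), total coal use goes up, not down.

```python
def resource_use(efficiency, elasticity, base_demand=100.0):
    """Total resource (e.g. coal) consumed under a hypothetical model.

    Assumptions (illustrative only):
    - the cost of one unit of useful work is proportional to 1 / efficiency
    - demand for useful work has constant price elasticity `elasticity`
    """
    price = 1.0 / efficiency
    work_demanded = base_demand * price ** (-elasticity)
    # Each unit of useful work needs 1 / efficiency units of the resource.
    return work_demanded / efficiency

for e in (0.5, 1.0, 1.5):
    before = resource_use(efficiency=1.0, elasticity=e)
    after = resource_use(efficiency=2.0, elasticity=e)
    print(f"elasticity {e}: resource use {before:.1f} -> {after:.1f}")
```

With these made-up numbers, doubling efficiency cuts resource use to about 70.7 at elasticity 0.5, leaves it flat at 1.0, and raises it to about 141.4 at 1.5. Swap coal for hours of work and the engine for A.I., and you get roughly the shape of the workplace numbers that follow.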

This idea is extremely important for many things: the number of lanes on the freeway, energy consumption, and pretty much all of our planetary boundaries, which we talked briefly about last week, but it’s also relevant for A.I.!

Just a few days ago, ActivTrak released their 2026 State Of The Workplace report. They analyzed 443 million hours of work activity across 160,000 employees and found that after people adopted A.I., every single work category went up. Email up 104%. Chat up 145%. Nothing decreased. Saturday productive hours up 46% over three years. Working on Sunday up 58%.

A.I. doesn’t save time. It creates capacity. And capitalism immediately fills that capacity with more work. Because of course it does. This is Jevons Paradox in the workplace, and it surprises exactly zero working people. You’ve seen this pattern before, but I am here to warn you: you’ve never seen it quite like this.

My prediction is that, almost universally across white-collar sectors, this is what’s about to happen: organizations will try NOT to do dramatic layoffs. But they won’t replace people who leave, either. Someone retires; no backfill. Positions they planned to create won’t get created. Someone resigns; restructure around the gap. Voluntary buyout programs try to stay small and off the radar. Contract workers get expirations instead of renewals. Headcount shrinks, quietly. The people who are left absorb the work. A.I. + “remaining humans racing to figure it out” will fill the capacity gap.

I call this The Invisible Layoff.

I suspect it’ll stay this way until the actual Crisis.

Anthropic, the company that makes Claude (one of the A.I.s I use), just published a rigorous labor market study using government data and its own usage data, and it points in this direction. No systematic increase in unemployment. But hiring into A.I.-exposed occupations has slowed significantly for workers aged 22 to 25, down about 14% since 2022. The door isn’t slamming shut; it’s quietly closing, especially for people just starting their careers.

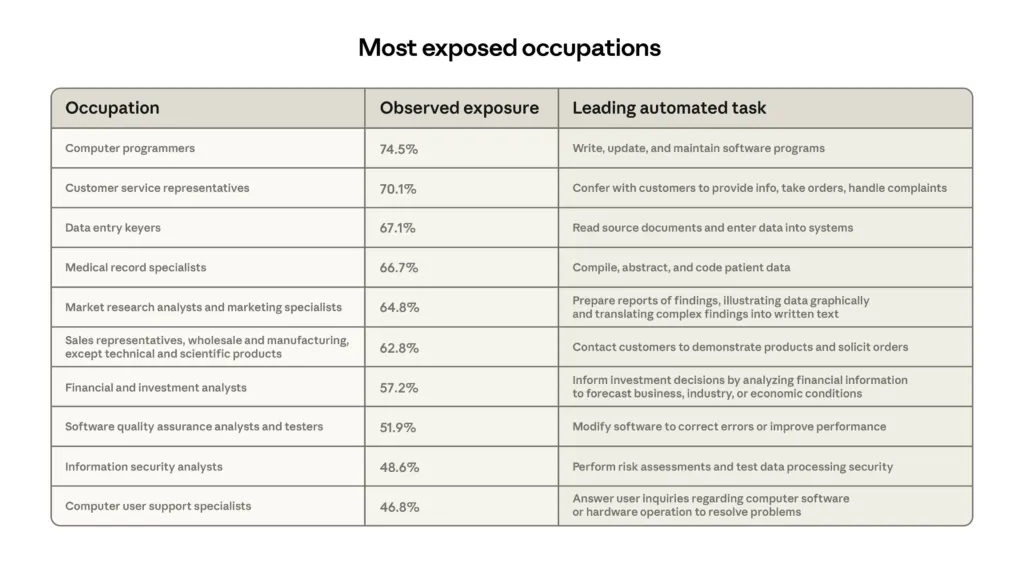

Back in Episode 18 — “Who Buys Your Stuff, Robots?” — I argued that the A.I. job crisis would come for white-collar workers first. Look at the ten most A.I.-exposed occupations in the study. Not a single factory worker. Not a single tradesperson. Every single one of these is a desk job, a knowledge job.

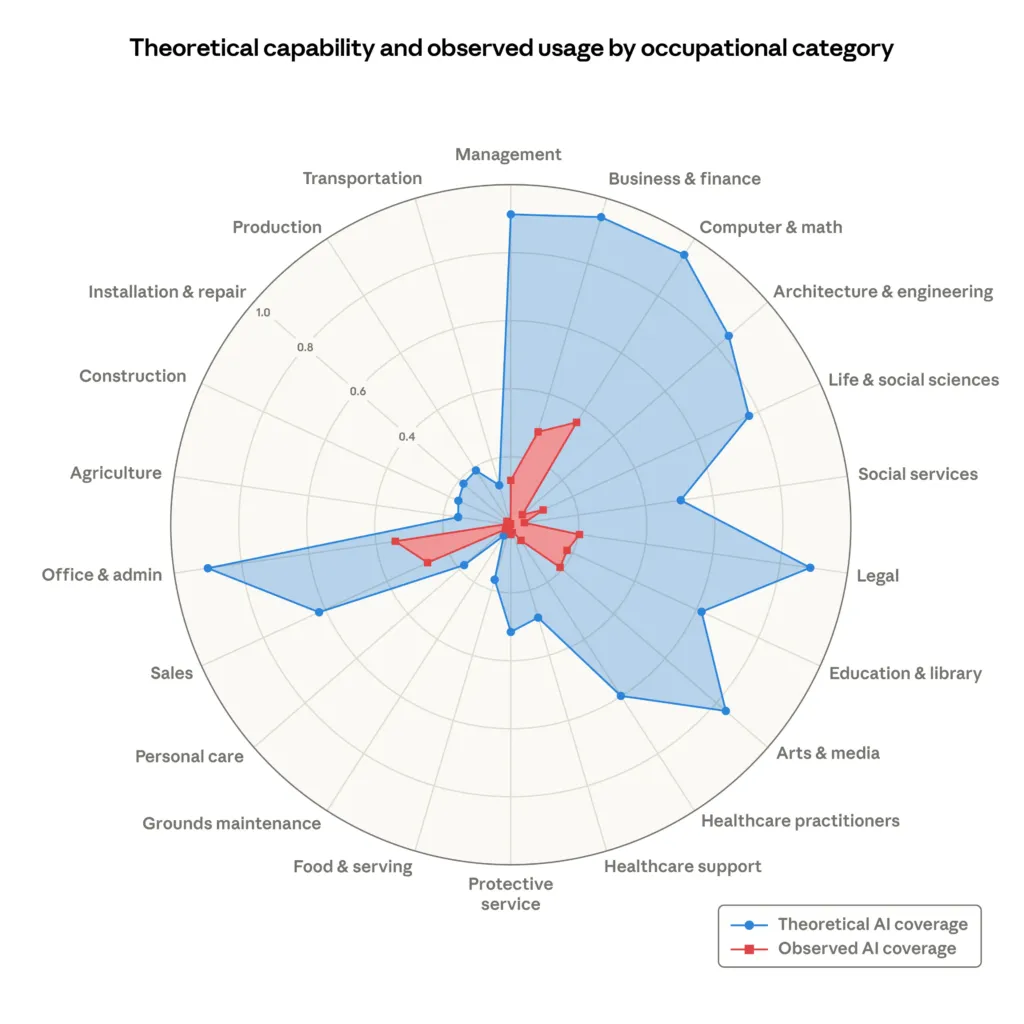

This same study also published a radar chart showing the theoretical capability of A.I. versus actual observed usage today. The blue area is what A.I. could do right now. The red area is what it’s actually doing.

The gap is enormous.

So when you’re told at work to “start using A.I.,” let’s be clear: that is the gap your executives want to fill with A.I. if they can. This isn’t malevolence, either; it’s just capitalism doing the only thing it knows how to do: optimize to make more capital.

We are in the first chapter of this story.

Everything we’ve talked about (The Invisible Layoff, the Jevons acceleration, the slowdown in hiring young workers, the white-collar disruption) is happening while A.I. is still warming up. When that red area starts filling the blue, the effects will get orders of magnitude larger.

Should we be afraid of that? Yes. Genuinely.

But let’s be scared in a productive way — because right now, there’s still time. We can be thoughtful, prepare, influence policy… you choose; where can YOU be most productive and influential?

Leadership Lens

If you’re a leader in an organization, here’s your Leadership Lens for today:

First — find out what your people are actually doing with A.I.

In a survey of their own customers (organizations already paying attention to workforce data), ActivTrak found that half still don’t measure A.I.’s impact on their workforce at all. My own experience confirms this: many organizations aren’t doing nearly enough to know what’s happening with A.I. inside their company. You cannot lead what you cannot see. Before you make a strategy, you need visibility. Where is A.I. being used? By whom? To what impact?

Second — actively help redesign roles.

A.I. basically commoditizes the middle of every knowledge work task — the making of the thing — which means most of your team members’ value-adding tasks move to the edges: setting context, providing direction, verifying output, exercising judgment. That’s where human value lives now. As you’re redesigning roles, be especially thoughtful about entry-level positions. The Anthropic data says the door is quietly closing for young workers. If you’re not intentionally designing pathways for new employees to develop judgment and wisdom, you’re going to hollow out your own talent pipeline.

Third — be the person who replaces fear with clarity.

Your people are asking the same question this episode is answering. They’re scared of the wrong things and the right things simultaneously. They need you to have a framework. Not all the answers. A framework. Confusion has a cost. When people don’t understand the world they’re operating in, they freeze, disengage, and burn out. Replacing fear with clarity is your most important leadership task. Want an easy way to do that? Share this article!

The Optimistic Rebellion

For all of us, here’s our Optimistic Rebellion.

Should we be scared of A.I.? Not in many of the ways we currently are. Not of hallucinations. Not of Terminators. Not of overnight mass unemployment. Those fears are built on broken logic and bad data. You can let those go.

But we should be scared — productively scared — of the Jevons Paradox. Of The Invisible Layoff. Of the enormous gap between what A.I. can already do and what it’s currently doing.

What do we do with that fear? Here are 3 practical skills; this week I challenge you to pick one and actually practice it:

1. Get more curious. At the beginning of this episode I promised to tell you how to function in a world of creative machines. This is how. It’s much like working with a creative human: give its creativity context and direction, and ask great freaking questions.

2. Get more clear. When jobs move from making the thing to setting up and verifying the thing, we need to get ruthlessly clear on our destination before we let A.I. start driving. Clarity is a competence we all need to build.

3. Slow the F down. I know this feels counterintuitive when everything feels like it’s getting faster. But that’s exactly why it’s the rebellious choice. We talked about this a few weeks ago: wisdom requires space and restraint. Speed is what machines are good at. Wisdom is what humans are good at. Slow the F down so your intuition has enough space to work.

The right response to A.I. isn’t panic. It’s not dismissal either. It’s clear-eyed, curious, unhurried engagement with what’s actually happening — so you can help shape what comes next.

Get curious. Get clear. Slow down.

When we do this, we can be just afraid enough to keep paying attention, but not so scared we can’t think straight. Maybe that’s always the best way to participate in an Optimistic Rebellion.